As artificial intelligence applications grow in scale and complexity, data center infrastructure is under unprecedented pressure. Training a large model today can require thousands of GPUs working in parallel and exchanging massive amounts of data in real time. In this context, the network is no longer a supporting element but a core factor in overall system efficiency. This is where the 400G OSFP SR4 optical module comes in: it provides the high-bandwidth, low-latency interconnect that modern AI clusters need in order to scale.

The Networking Challenge in AI Training Clusters

AI training clusters rely heavily on distributed computing. Under both model parallelism and data parallelism, GPUs must continuously exchange gradients, parameters, and intermediate results. This generates heavy east-west traffic within the cluster and places extremely high demands on the underlying network.

In such scenarios, older link speeds quickly become the bottleneck; even 100G links often struggle to keep up with the communication needs of high-performance GPUs. As a result, 400G connectivity has become the new baseline for AI infrastructure, helping keep compute resources busy rather than waiting on the network.
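To make the gap between 100G and 400G links concrete, here is a minimal back-of-the-envelope sketch. It assumes gradients are synchronized with a bandwidth-optimal ring all-reduce, in which each node sends roughly 2(N-1)/N times the payload, and it ignores latency, protocol overhead, and any overlap with computation. The function name, the 10-billion-parameter model, and the 8-node cluster are hypothetical values chosen for illustration, not figures from any specific deployment.

```python
def ring_allreduce_seconds(payload_bytes: float, nodes: int, link_gbps: float) -> float:
    """Estimate the time for one ring all-reduce, bandwidth term only.

    Assumption: each node transmits 2 * (nodes - 1) / nodes * payload_bytes
    over a single link of `link_gbps` gigabits per second.
    """
    if nodes < 2:
        return 0.0  # nothing to exchange with a single node
    bytes_per_second = link_gbps * 1e9 / 8  # gigabits/s -> bytes/s
    traffic_per_node = 2 * (nodes - 1) / nodes * payload_bytes
    return traffic_per_node / bytes_per_second


if __name__ == "__main__":
    # Hypothetical workload: 10 billion parameters, 2 bytes per gradient (FP16).
    gradient_bytes = 10e9 * 2
    for gbps in (100, 400):
        seconds = ring_allreduce_seconds(gradient_bytes, nodes=8, link_gbps=gbps)
        print(f"{gbps}G link: {seconds:.2f} s per all-reduce")
```

Under these assumptions the estimate falls from about 2.8 s on a 100G link to about 0.7 s on a 400G link. Real systems overlap communication with computation and use several links per server, so the absolute numbers will differ, but the fourfold ratio shows why link speed matters.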

Meeting GPU Bandwidth Demands

Modern GPUs offer extremely high memory bandwidth and processing power, but without a network connection to match, much of that performance is lost while GPUs wait on communication.
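One rough way to reason about this loss is to look at the share of each training step that goes to useful computation. The sketch below is a simplified model, not a measurement: the function name, the step timings, and the `overlap` parameter (the share of communication hidden behind computation) are all assumptions made for illustration.

```python
def useful_compute_fraction(compute_s: float, comm_s: float, overlap: float = 0.0) -> float:
    """Fraction of a training step spent on computation.

    compute_s: time spent computing per step (seconds).
    comm_s:    time spent communicating per step (seconds).
    overlap:   share of communication hidden behind compute, from 0.0 to 1.0.
    """
    if compute_s <= 0:
        raise ValueError("compute_s must be positive")
    if comm_s < 0:
        raise ValueError("comm_s must not be negative")
    if not 0.0 <= overlap <= 1.0:
        raise ValueError("overlap must be between 0.0 and 1.0")
    exposed_comm_s = comm_s * (1.0 - overlap)  # communication the GPU actually waits on
    return compute_s / (compute_s + exposed_comm_s)


if __name__ == "__main__":
    # Hypothetical step: 1.0 s of compute, with 1.0 s vs 0.25 s of exposed communication.
    for comm_s in (1.0, 0.25):
        fraction = useful_compute_fraction(1.0, comm_s)
        print(f"comm {comm_s:.2f} s -> {fraction:.0%} of the step is compute")
```

In this toy case, cutting exposed communication from 1.0 s to 0.25 s raises the compute share of the step from 50% to 80%.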

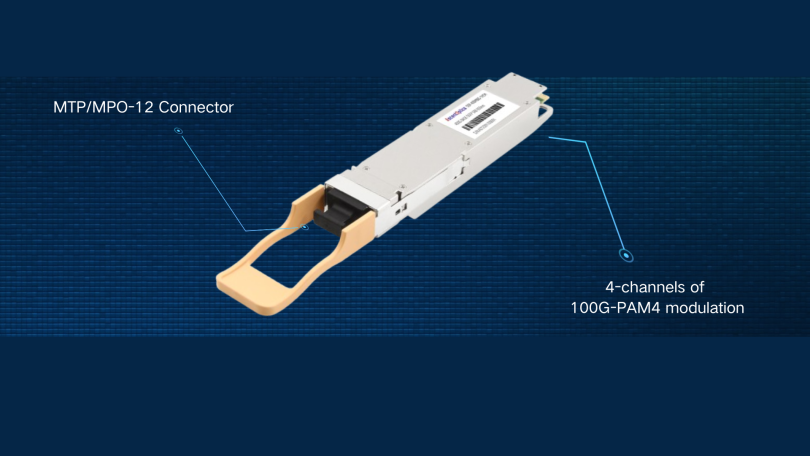

The 400G OSFP SR4 module addresses this problem by providing a 400 Gb/s connection between switches and GPU servers. It transmits over four parallel optical lanes of 100 Gb/s each on multimode fiber and is optimized for short-reach, high-density applications such as AI clusters. Faster data exchange between nodes shortens training time and improves overall system efficiency.

In practical deployments, this type of module is often used to connect top-of-rack (ToR) switches to GPU servers or to interconnect switches within the same cluster. Its stable, high-speed transmission over short distances makes it well suited to tightly coupled compute clusters.

Enabling InfiniBand NDR Performance

InfiniBand NDR is one of the key technologies behind modern high-performance AI networks. It delivers up to 400 Gb/s per port and was designed from the outset for high-performance computing and demanding AI workloads. InfiniBand's core advantages are its very low latency and very high throughput. It also supports RDMA (Remote Direct Memory Access), which lets one node read from or write to another node's memory directly, without involving either CPU in the data path.

400G SR4 modules are a natural fit for InfiniBand NDR deployments. They support the required data rates while maintaining the signal integrity and reliability needed for mission-critical AI training tasks. When paired with high-performance network interface cards and switches, they help create a fabric that can efficiently handle the demanding communication patterns of large-scale AI workloads.

Optimized for High-Density Data Centers

AI clusters are typically deployed in high-density data center environments, where space, power, and cooling are tightly constrained. OSFP form factor modules are designed with these challenges in mind, offering improved thermal performance and higher power budgets compared to earlier form factors.

Combined with lightweight, flexible multimode fiber, 400G SR4 modules enable clean and efficient cabling in dense racks. This improves airflow and cooling efficiency and also simplifies installation and maintenance, both of which are critical in large-scale deployments.

Conclusion

As AI continues to drive exponential growth in data center performance requirements, the importance of high-speed, low-latency networking cannot be overstated. 400G OSFP SR4 modules play a vital role in enabling scalable AI cluster architectures by delivering the bandwidth and reliability needed for GPU-intensive workloads. By supporting InfiniBand NDR and optimizing short-reach, high-density connectivity, they have become a foundational component in the next generation of AI infrastructure.